A pattern, not an anomaly

Artificial intelligence is often described as neutral. But a growing body of research, and increasingly disability advocacy, points to something more consistent:

AI systems are not neutral. They are selective in whose experiences they recognise and whose they overlook.

When it comes to disability, that selectivity is not subtle. It is measurable, repeatable, and increasingly documented.

A 2023 study on sentiment and toxicity models found statistically significant negative bias toward disability-related language across widely used systems. This was not a single failure. It was a pattern.

Disability organisations have reached a similar conclusion from a different direction.

Groups such as Disability Ethical AI have warned that disability is often absent from the design, testing, and governance of AI systems altogether, a form of exclusion that occurs before bias is even measured.

When AI cannot recognise harm

The issue extends beyond output into understanding.

Research comparing large language models with disabled participants found that AI systems consistently failed to identify ableism at the same level as those with lived experience. In some cases, harm was underestimated. In others, explanations lacked accuracy or nuance.

This aligns with concerns raised by advocacy groups. If disability is not meaningfully included in system design, then AI will not recognise it properly in practice. It cannot account for harm it has not been built to see.

Bias in decision-making, not just language

The consequences are not theoretical.

In hiring simulations, AI systems assessing CVs were found to rank candidates with disability indicators lower than otherwise identical candidates without them. The bias extended into reasoning, where disability was framed as a disadvantage.

Disability organisations have repeatedly warned that these outcomes are predictable.

When systems are built without disabled perspectives, they do not simply overlook disabled people; they disadvantage them.

Built on assumptions of normal

At a structural level, multiple studies point to a shared issue: AI systems are built around an assumed normal user.

Typically:

able-bodied

independent

consistent in capacity

Research has shown that AI development often reflects a medical model of disability, treating it as something to fix or minimise, rather than a lived condition to accommodate.

Advocacy groups go further.

They argue that this assumption of normality is not neutral; it is exclusionary by design. If systems are built around a narrow definition of the user, anyone outside that definition becomes an afterthought.

Representation shapes outcomes

Disabled people remain underrepresented in datasets, research, and system design.

The result is predictable:

incomplete understanding

simplified outputs

reliance on stereotype

Reports from disability-focused organisations highlight that AI-generated content can distort disabled experiences, often reducing them to narrow or familiar narratives. Not because AI lacks capability. But because it lacks input from those with lived experience.

This is why disability advocacy consistently returns to one principle: nothing about us without us.

When systems scale bias

The impact becomes most visible when these systems are deployed at scale.

In the UK, an AI system used to detect welfare fraud was found to show bias linked to disability, with disabled individuals more likely to be flagged. In healthcare, studies have demonstrated that AI can reproduce disparities in treatment recommendations.

These are not edge cases. They are systems that influence access to support, care, and opportunity.

When bias exists at this level, it is no longer subtle.

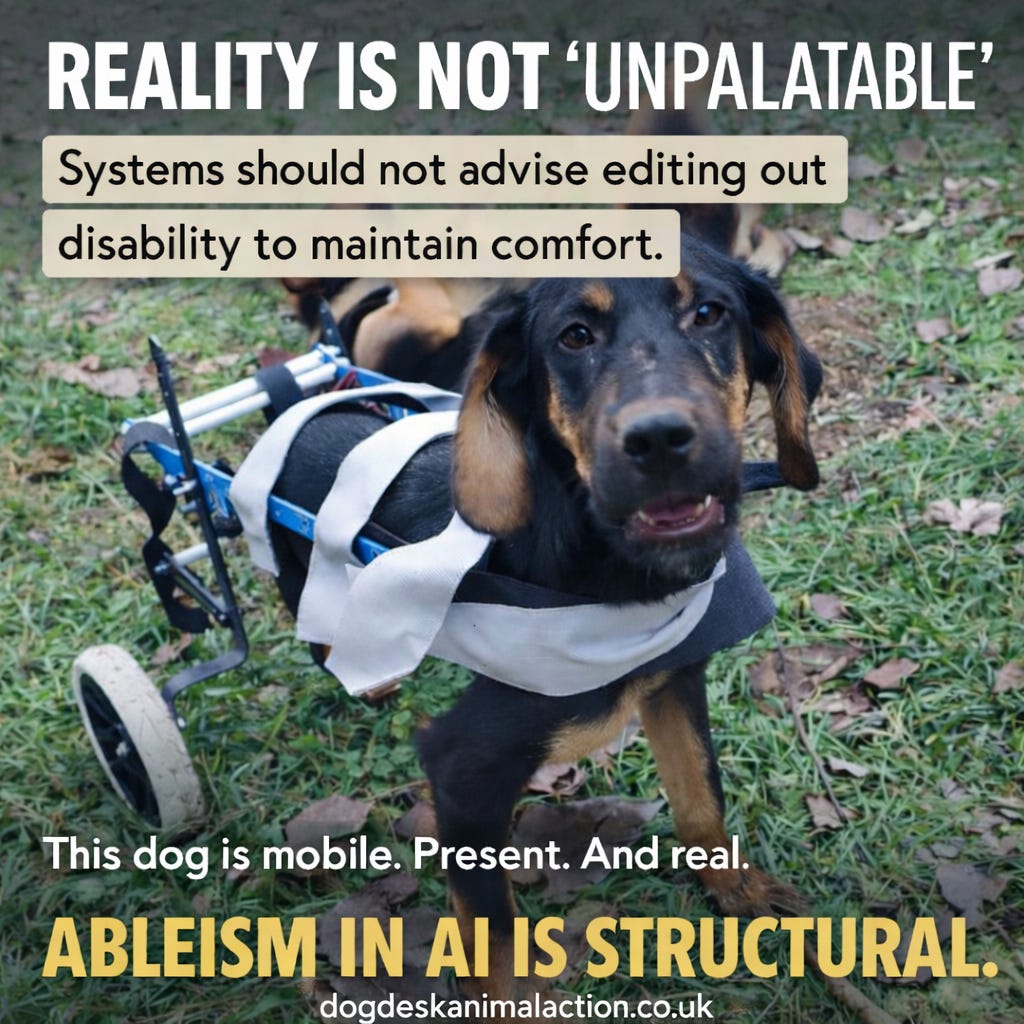

When “palatable” replaces reality

This is not only theoretical. We have experienced it directly.

While measuring and predicting content success, we were advised to remove an image of a dog using a wheelchair because it was considered less palatable to an audience.

The implication was clear. Disability should be softened. Or removed entirely.

But there is nothing unusual about that image. It reflects a dog who has adapted. A dog who is mobile, present, and living.

The discomfort does not sit with the dog. It sits with perception. Disability organisations describe this dynamic clearly.

When disability is included, it is often:

simplified

reframed

or edited to fit expectations

What falls outside that expectation is treated as something to minimise. This is not accidental. It is the same structural pattern identified across AI systems:

prioritising what is familiar, comfortable, and easy to process over what is real.

A consistent conclusion

Across research and advocacy, the findings align:

AI systems demonstrate measurable bias against disability

They struggle to recognise ableism accurately

They are built around narrow assumptions of a normal user

Disabled people are excluded from design and decision-making

These patterns result in real-world disadvantage

This consistency matters. It tells us that ableism in AI is not incidental.

It is structural.

What this reflects

AI does not operate independently of society. It reflects the priorities, assumptions, and omissions of the systems that build it. If disability is treated as something to minimise, soften, or remove, AI will reproduce that approach.

If disabled people are excluded from design, they will be excluded from outcomes.

The question is not whether AI contains bias. The question is whether we are prepared to accept systems that quietly decide which realities are acceptable to show and which are not.

Sources & Evidence

Automated Ableism: Explicit Disability Biases in Sentiment and Toxicity Models (2023)

LLM vs human evaluation of ableism (2024–2025)

Disability bias in AI hiring evaluations (2024)

Systematic review of AI and disability frameworks (2024)

Research on design-driven bias in AI systems (2022–2023)

Disability Ethical AI

AI Now Institute – Disability bias and inclusion in AI

Inclusion Scotland – AI and accessibility

NYC Bar Association – AI impact on disabled people

UK welfare fraud AI bias reporting

Healthcare AI bias studies